NGVS MegaPipe documentation

| Introduction |

- Stacking procedure

- File types

- Naming convention

- A note on gain

| Stacking procedures |

The first part of the stacking procedure is the astrometric and photometric calibration of the Elixir-processed input images. This is almost identical to the procedure described in the general MegaPipe documentation (see the MegaPipe pages on astrometry and on photometry). The SDSS is used for both the astrometric and the photometric calibration. When the Elixir-LSB data are processed, the photometric calibration is determined on the scale of the whole mosaic; that is, a single scaling factor is applied to all 36 CCDs. This means that the small (0.03-0.06 mag) photometric zero-point variations across the MegaCam mosaic are not corrected (see this page for a full discussion). However, a global photometric scaling is required because of the nature of the Elixir-LSB processing: if different scaling factors were applied to different chips, the surface brightness levels would not match up across chip boundaries. The two methods that do not use Elixir-LSB data determine the photometric zero-point on a chip-by-chip basis and will have slightly superior photometry.
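The difference between a mosaic-wide and a chip-by-chip zero-point can be made concrete with a minimal sketch. It assumes the calibration reduces to the median offset between SDSS and instrumental magnitudes of matched stars; MegaPipe's actual fit may differ, and the `Match` layout and both function names are hypothetical.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, List, Tuple

# Hypothetical match record: (chip_id, instrumental_mag, sdss_mag) for one star.
Match = Tuple[int, float, float]


def global_zero_point(matches: List[Match]) -> float:
    """One zero-point for the whole mosaic (the Elixir-LSB case)."""
    return median([sdss - inst for _, inst, sdss in matches])


def per_chip_zero_points(matches: List[Match]) -> Dict[int, float]:
    """One zero-point per CCD (the case of the two non-Elixir-LSB methods)."""
    offsets: Dict[int, List[float]] = defaultdict(list)
    for chip, inst, sdss in matches:
        offsets[chip].append(sdss - inst)
    return {chip: median(vals) for chip, vals in offsets.items()}


matches = [(0, 20.0, 26.0), (0, 21.0, 27.0), (1, 20.0, 26.04), (1, 22.0, 28.04)]
print(global_zero_point(matches))     # about 6.02
print(per_chip_zero_points(matches))  # chip 0 about 6.00, chip 1 about 6.04
```

In this toy example the single mosaic zero-point leaves a residual of about 0.02 mag on each chip. That is the kind of chip-to-chip error accepted in exchange for continuous surface brightness across chip boundaries.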

The next steps are background subtraction and stacking. MegaPipe has implemented three separate methods:

- Local background subtraction, median combine (Ml128): This is the standard MegaPipe procedure. SWarp is run on the normal Elixir-processed images and removes the background on a local 128x128 pixel grid. As a result, objects on about this scale or larger are effectively removed from the images. This is obviously highly undesirable for anyone studying the structure of M87 or any of the other large galaxies. However, objects such as small galaxies, stars and globular clusters are unaffected. This method provides the best background removal (provided one is not interested in large extended objects). Because the photometric zero-point is computed on a chip-by-chip basis, it also provides the best photometry.

- MegaPipe global background subtraction version 2, artificial skepticism combine (Mg002): In this case, the background of each input image is removed globally, that is, on the scale of the whole mosaic. The background subtraction uses available MegaCam images to build up a general background map. This map is scaled to the appropriate sky level and then subtracted from the NGVS images. A detailed description can be found here. The background-subtracted images are combined using artificial skepticism (described below). This method does not do quite as good a job at background subtraction as the Elixir-LSB method described below. However, it provides superior point-source photometry because, as noted above, its photometric zero-points are determined chip by chip. Also, Elixir-LSB processing can only be done if the images are taken in a specified sequence, whereas this method can be applied to any image. This is the best method available for the short-exposure images.

- Elixir-LSB global background subtraction, artificial skepticism combine (Mg004): In this case the Elixir-LSB images are stacked. The Elixir-LSB images produced by Jean-Charles Cuillandre have the background variations removed but still retain a constant background level. MegaPipe removes this constant level from each input image. The background level is determined for each chip as the mode of the pixel values. The mode is a true mode (not the approximation 3*median - 2*mean), determined by the method described here.

  Different chips can have quite different background levels, because bright galaxies and scattered light from bright foreground stars raise the background level locally. The lowest background level, that is, the background from the least contaminated chip, is used as the constant background level for the whole mosaic. Typically, if the field is uncontaminated, the difference between the darkest and brightest chips is only about 0.1%.
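The reason a 128-pixel background mesh erases large objects in the Ml128 method can be seen with a toy version of the background step. This is a simplified sketch, not SWarp's algorithm. Here each cell's plain median is subtracted as a constant, whereas SWarp estimates the background with clipped statistics and interpolates smoothly between cells. `mesh_background_subtract` is a hypothetical helper.

```python
from statistics import median
from typing import List

Image = List[List[float]]


def mesh_background_subtract(image: Image, mesh: int = 128) -> Image:
    """Subtract a background estimated as the median of each mesh x mesh cell.

    Simplification: the cell median is subtracted as a constant, with no
    clipping and no interpolation between neighbouring cells.
    """
    ny, nx = len(image), len(image[0])
    out = [row[:] for row in image]
    for y0 in range(0, ny, mesh):
        for x0 in range(0, nx, mesh):
            ys = range(y0, min(y0 + mesh, ny))
            xs = range(x0, min(x0 + mesh, nx))
            level = median([image[y][x] for y in ys for x in xs])
            for y in ys:
                for x in xs:
                    out[y][x] = image[y][x] - level
    return out


# Sky of 10, one bright "star" pixel, and an extended "galaxy" filling a cell.
img = [[10.0] * 8 for _ in range(8)]
img[1][1] = 110.0
for y in range(4, 8):
    for x in range(4, 8):
        img[y][x] = 60.0
out = mesh_background_subtract(img, mesh=4)
print(out[1][1], out[5][5])  # 100.0 0.0
```

With `mesh=4`, the single bright pixel survives almost intact, while the object that fills a whole cell is subtracted to zero. At the real 128-pixel scale, the same effect makes Ml128 unsuitable for large galaxies such as M87.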
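The Mg004 background step, which takes a per-chip mode and then subtracts the lowest chip level from the whole mosaic, might be sketched as follows. The histogram-peak mode estimator and its `bin_width` parameter are stand-ins chosen for illustration. They are not the "true mode" method referenced above, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def histogram_mode(pixels: List[float], bin_width: float = 1.0) -> float:
    """Centre of the most populated histogram bin (ties go to the lower bin)."""
    counts = Counter(int(p // bin_width) for p in pixels)
    peak_bin = max(counts, key=lambda b: (counts[b], -b))
    return (peak_bin + 0.5) * bin_width


def subtract_mosaic_level(
    chips: Dict[str, List[float]], bin_width: float = 1.0
) -> Tuple[float, Dict[str, List[float]]]:
    # Background level of each chip, measured separately as a mode.
    levels = {name: histogram_mode(pix, bin_width) for name, pix in chips.items()}
    # The least contaminated (darkest) chip sets one constant for the whole mosaic.
    level = min(levels.values())
    return level, {name: [p - level for p in pix] for name, pix in chips.items()}


chips = {
    "ccd00": [100.2] * 50 + [100.7] * 10 + [150.0] * 5,
    "ccd01": [101.3] * 40 + [300.0] * 20,  # raised by scattered light
}
level, subtracted = subtract_mosaic_level(chips)
print(level)  # 100.5
```

Because the same constant is removed from every chip, chip-to-chip differences in the Elixir-LSB background are preserved rather than flattened. The precision of this toy estimator is limited by `bin_width`.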

Once the background has been removed, stacking proceeds as normal, with SWarp used to resample the images. Combining the images with a median provides excellent rejection of cosmic rays and other outliers, but it slightly increases the noise in the output images; the typical decrease in depth is about 0.16 magnitudes. Artificial skepticism (Stetson 1989) is a method of computing a robust average image using a continuous weighting scheme derived from the data themselves. The weights are given by:
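A standard form of Stetson's weighting function is shown below, written with the symbols defined in the next paragraph. Any additional inverse-variance factor $1/\sigma_i^2$ that MegaPipe may apply is not shown.

$$
w_i = \frac{1}{1 + \left( \dfrac{|r_i|}{\alpha\,\sigma_i} \right)^{\beta}}
$$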

where $w_i$ is the weight of the $i$-th input pixel, $\sigma_i$ is its standard deviation (determined by the read noise and gain), and $r_i$ is the residual of the $i$-th input pixel with respect to the current average value. The method starts from the median. Weighted averages are then computed iteratively using the equation above. Pixel values that fall far from the average are given less weight in each successive iteration ($r_i$ is larger, so $w_i$ becomes smaller). The equation can be tuned using the free parameters $\alpha$ and $\beta$. Here they are set to $\alpha=1$ and $\beta=2$, as in the WFPC2 pipeline. After a few iterations (both MegaPipe and the WFPC2 pipeline stop at 5 iterations), the procedure converges. If the stack contains a defective pixel (e.g. one affected by a cosmic ray), its weight will be small enough that it contributes negligibly to the output. If there are no defective pixels, all the pixels will be weighted roughly equally. This differs from sigma clipping in that there is no hard, discrete cutoff defining when a pixel is "good" or "bad". Instead, pixels are rejected gradually as they deviate from the central value.
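The iteration described above can be sketched for a single stack of co-registered pixel values. This minimal version assumes the weight form $w_i = 1/[1 + (|r_i|/(\alpha\sigma_i))^\beta]$ with no extra inverse-variance factor, strictly positive $\sigma_i$, and a fixed 5 iterations. The function names are hypothetical, and this is not the gcomb code.

```python
from statistics import median
from typing import List, Sequence, Tuple


def skeptic_weights(values: Sequence[float], sigmas: Sequence[float],
                    centre: float, alpha: float, beta: float) -> List[float]:
    """Continuous weights: near 1 for small residuals, near 0 for outliers."""
    return [1.0 / (1.0 + (abs(v - centre) / (alpha * s)) ** beta)
            for v, s in zip(values, sigmas)]


def artificial_skepticism(values: Sequence[float], sigmas: Sequence[float],
                          alpha: float = 1.0, beta: float = 2.0,
                          n_iter: int = 5) -> Tuple[float, List[float]]:
    """Robust average of one pixel stack.

    Returns the final estimate and the weights used in the last iteration.
    """
    centre = median(values)  # the median is the starting point
    weights = [1.0] * len(values)
    for _ in range(n_iter):
        weights = skeptic_weights(values, sigmas, centre, alpha, beta)
        centre = sum(w * v for w, v in zip(weights, values)) / sum(weights)
    return centre, weights


# Five exposures of one pixel; the last one is hit by a cosmic ray.
estimate, w = artificial_skepticism([10.0, 10.5, 9.5, 10.2, 500.0], [1.0] * 5)
print(round(estimate, 2), w[4] < 1e-4)  # roughly 10.07, True
```

The cosmic-ray value ends up with a weight of a few millionths, while the good values keep weights between roughly 0.75 and 1. No pixel is ever discarded outright. A pure-Python loop like this would be far too slow over every pixel of a full-size stack, so in production the same per-pixel logic has to run in compiled code.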

The output images show excellent cosmic-ray rejection. Experiments show that the depth of images combined with artificial skepticism is within 0.08 magnitudes of that of images combined with a blind average.
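The depth cost of a median combine can be estimated with a short simulation. This sketch assumes pure Gaussian noise with equal variance in every frame, which real stacks only approximate. It uses the fact that the limiting flux scales with the noise, so the depth difference is 2.5 log10 of the noise ratio. `depth_loss_of_median` is a hypothetical helper.

```python
import math
import random
from statistics import median, pstdev


def depth_loss_of_median(n_images: int, n_trials: int = 20000, seed: int = 1) -> float:
    """Monte Carlo estimate of the depth (in mag) lost by median-combining
    n_images frames of Gaussian noise instead of averaging them."""
    rng = random.Random(seed)
    means, medians = [], []
    for _ in range(n_trials):
        stack = [rng.gauss(0.0, 1.0) for _ in range(n_images)]
        means.append(sum(stack) / n_images)
        medians.append(median(stack))
    # Depth difference = 2.5 log10(noise of median / noise of mean).
    return 2.5 * math.log10(pstdev(medians) / pstdev(means))


for n in (3, 5, 9):
    print(n, round(depth_loss_of_median(n), 3))
```

For a large number of frames the loss tends toward 2.5 log10(sqrt(π/2)), about 0.25 mag. For small stacks it is smaller, so the value measured on real data depends on the number of exposures combined.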

In practice, for the NGVS data, the images are treated as in the previous method up to the SWarp stage: the images are calibrated and the constant background is removed as before. SWarp is then used to remap each of the input images to a common pixel grid, but not to combine them. The remapped images are then combined using gcomb, a Fortran implementation of the artificial skepticism algorithm. (The Python implementation used by STScI is too slow for 20000x20000 pixel images.)
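A remap-only SWarp run could be assembled as below. `COMBINE`, `SUBTRACT_BACK`, `RESAMPLE` and `RESAMPLE_DIR` are genuine SWarp configuration keywords, which SWarp accepts on the command line as `-KEYWORD value`. However, the settings MegaPipe actually uses are not documented here, so the values, the config filename and the helper name are assumptions.

```python
import subprocess
from typing import List


def swarp_remap_command(images: List[str], config: str = "default.swarp",
                        resample_dir: str = ".") -> List[str]:
    """Build a SWarp command that resamples each image onto a common grid
    without co-adding them and without further background subtraction
    (the constant level has already been removed)."""
    return [
        "swarp", *images,
        "-c", config,
        "-COMBINE", "N",        # write the resampled frames only, no co-add
        "-SUBTRACT_BACK", "N",  # background was removed earlier
        "-RESAMPLE", "Y",
        "-RESAMPLE_DIR", resample_dir,
    ]


def run_remap(images: List[str]) -> None:
    # Requires SWarp to be installed and on the PATH.
    subprocess.run(swarp_remap_command(images), check=True)
```

The resampled frames written to `resample_dir` would then be handed to the artificial-skepticism combiner. Keeping every frame on exactly the same output grid may also require fixing the output projection, for example with a shared output header.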

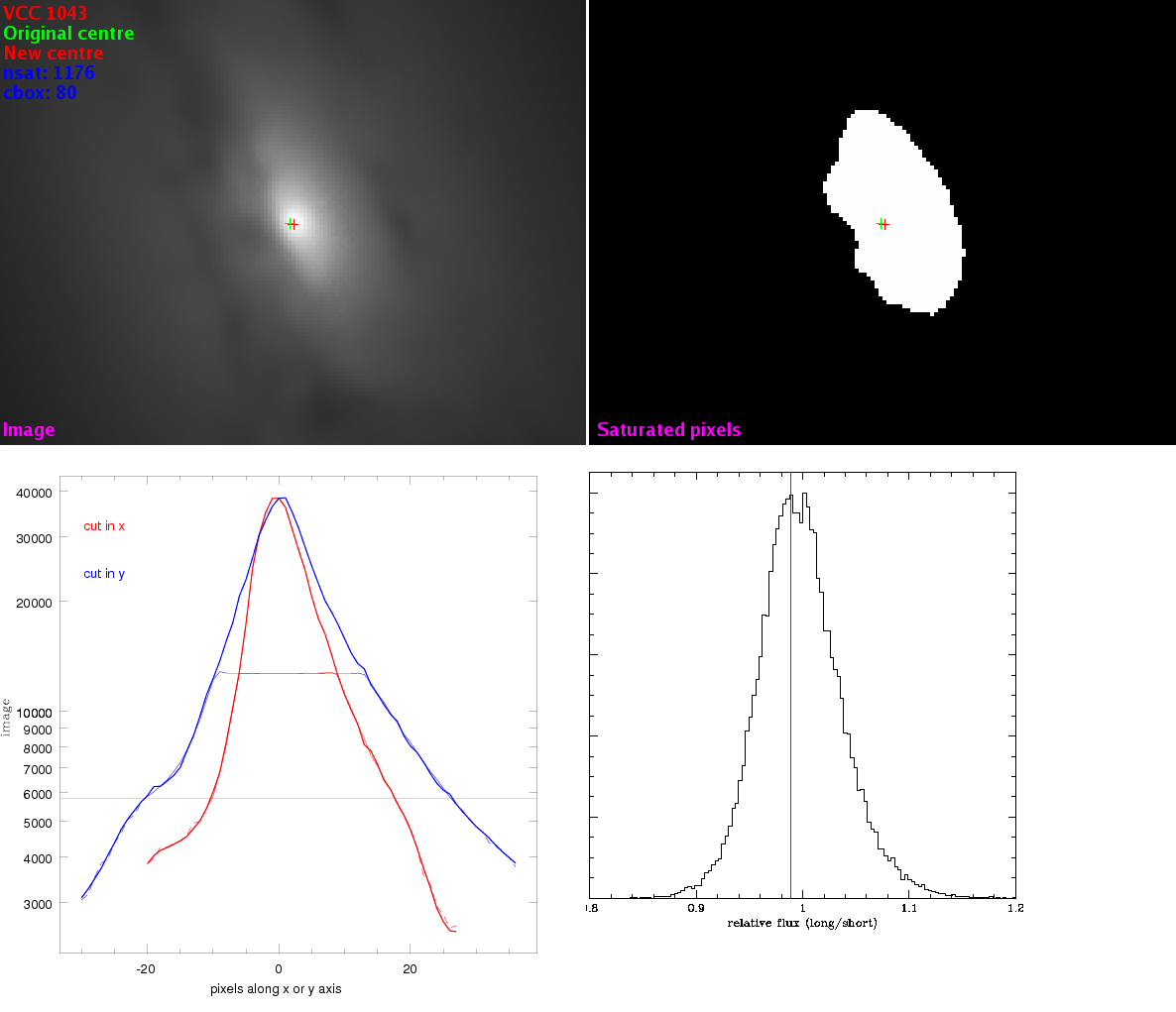

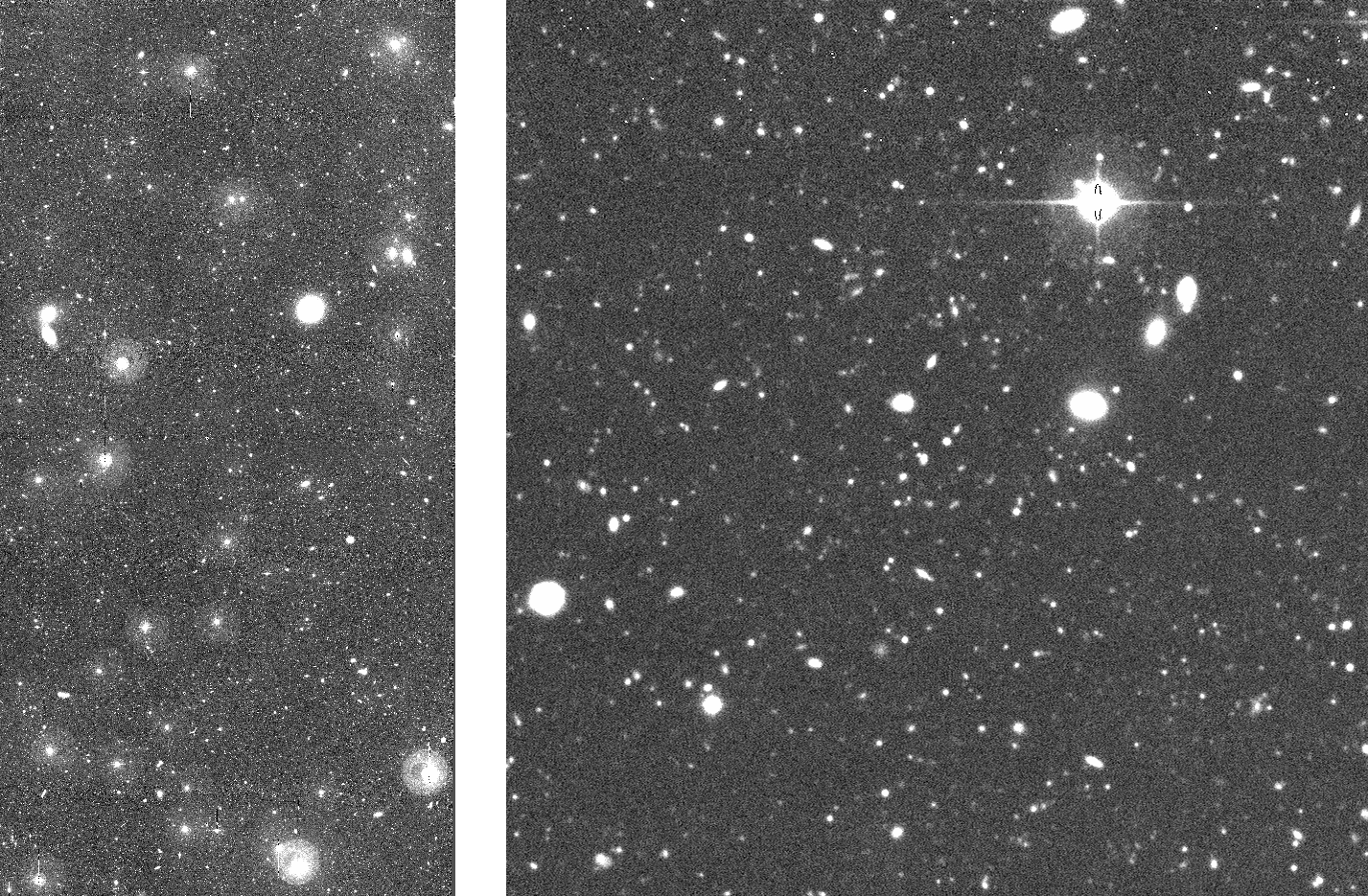

In the long exposures, the centres of bright galaxies are saturated. A procedure has been developed to replace the central pixels in the long-exposure stacks with the equivalent pixels from the short-exposure stacks. For each long stack, the saturation level is computed from the scaling factors and sky levels of the input images. To avoid any non-linearity issues, the adopted threshold is set at 50% of the lowest saturation level dictated by any of the input images. A search is made for pixels exceeding this level in a box around each VCC galaxy in the image. If no saturated pixels are detected, the program moves on to the next galaxy. Most of the VCC galaxies are faint (or at least not centrally concentrated) and have no saturated pixels. Where there are saturated pixels, typically no more than 20 or so at the very centre of the galaxy are affected. If the saturation is more extended, the search box grows.

Once all the saturated pixels in the long exposure are identified, they are replaced by the corresponding pixels in the short image. Because the long and short stacks are already registered to 0.04 arcseconds or better, no shift is required. The photometric scaling is almost identical, since both stacks have the same zero-point. In some cases, a small correction of about 1-2% is applied to the scaling of the short image. This correction is computed by comparing the values of the non-saturated pixels in the vicinity of the centre of the galaxy in question. The pixels in the long (.l.) image are then replaced with the values from the short (.s.) image, and the output is written to the merged (.m.) image. The weight and sigma images are also merged, which causes a sharp drop in weight (and increase in sigma) near the centres of bright galaxies. These images are available via the regular graphical search tool, the cutout service and VOspace.
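The merge step could look roughly like the sketch below. It relies on these assumptions:

- Both stacks are already registered and share a zero-point, as stated above.
- Each input contributes (raw − sky) × scale to a stack, so its saturation level maps to (saturation − sky) × scale in stack units.
- The small scaling correction is the median long/short ratio of unsaturated pixels in the search box.

The function names, the doubling of the search box and `min_signal` are illustrative choices, not the NGVS code. Merging of the weight and sigma images is omitted.

```python
from statistics import median
from typing import List, Sequence, Tuple

Image = List[List[float]]


def saturation_threshold(sat_adu: Sequence[float], skies: Sequence[float],
                         scales: Sequence[float], fraction: float = 0.5) -> float:
    """`fraction` of the lowest per-input saturation level, in stack units."""
    levels = [(sat - sky) * scale for sat, sky, scale in zip(sat_adu, skies, scales)]
    return fraction * min(levels)


def merge_saturated(long_img: Image, short_img: Image, centre: Tuple[int, int],
                    threshold: float, half: int = 5,
                    min_signal: float = 0.0) -> Tuple[Image, float]:
    """Replace saturated long-stack pixels near `centre` with scaled short-stack pixels."""
    ny, nx = len(long_img), len(long_img[0])
    cy, cx = centre
    while True:
        y0, y1 = max(cy - half, 0), min(cy + half + 1, ny)
        x0, x1 = max(cx - half, 0), min(cx + half + 1, nx)
        box = [(y, x) for y in range(y0, y1) for x in range(x0, x1)]
        bad = [(y, x) for y, x in box if long_img[y][x] > threshold]
        touches_edge = any(y in (y0, y1 - 1) or x in (x0, x1 - 1) for y, x in bad)
        whole_image = (y0, x0, y1, x1) == (0, 0, ny, nx)
        if not touches_edge or whole_image:
            break
        half *= 2  # saturation reaches the box edge, so grow the search box
    merged = [row[:] for row in long_img]
    if not bad:
        return merged, 1.0  # nothing saturated: move on to the next galaxy
    # Small scaling correction from unsaturated pixels near the centre.
    ratios = [long_img[y][x] / short_img[y][x] for y, x in box
              if long_img[y][x] <= threshold and short_img[y][x] > min_signal]
    ratio = median(ratios) if ratios else 1.0
    for y, x in bad:
        merged[y][x] = short_img[y][x] * ratio
    return merged, ratio
```

In a toy case where the long stack is 1% brighter than the short stack and one central pixel exceeds the threshold, the returned ratio is 1.01. The replaced pixel is the short-stack value times 1.01, and every other pixel is left untouched.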